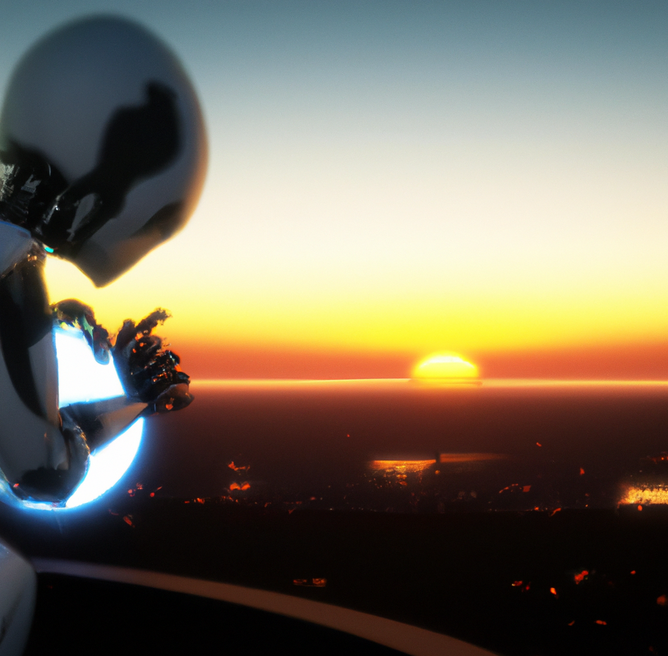

Hundreds of experts sound alarm on AI's existential threat

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

This is the statement published by the Centre for AI Safety (CAIS) and signed by more than 350 people, among them world-famous AI scientists, professors, COOs and many other notable figures.

Among them are Sam Altman, OpenAI's chief executive; Demis Hassabis, Google DeepMind's chief executive; and Dario Amodei, Anthropic's chief executive, representing three of the world's leading AI companies.

Regulation of AI a necessity

The CAIS commentary states, “AI experts, journalists, policymakers, and the public are increasingly discussing a broad spectrum of important and urgent risks from AI,” and adds that, because it can still be difficult to voice concerns about the most severe of those risks, the statement “aims to overcome this obstacle and open up discussion. It is also meant to create common knowledge of the growing number of experts and public figures who also take some of advanced AI’s most severe risks seriously.”

OpenAI's Sam Altman has repeatedly highlighted the potential perils of AI, from sweeping disinformation and unforeseeable economic shocks to large-scale cyber attacks and, now, potential human extinction.

To address the potential adverse consequences for humanity posed by increasingly powerful AI, Altman has stressed the importance of involving regulators and society at large to act as a safeguard.

He has, however, also underscored that AI remains a tool under human control, functioning solely through human guidance and input.

Taking that position, many have argued that such calls are nothing but fear-mongering, or misunderstandings at best, but the experts beg to differ.

On a recent episode of the Lex Fridman Podcast, Altman acknowledged that a certain level of apprehension toward AI is rational, stating that it is "crazy not to be a little bit afraid," and that he sees a healthy dose of wariness toward it as a positive.

Superintelligence to be achieved in the next 5 years?

During a recent interview with The Wall Street Journal, Demis Hassabis, CEO of Google DeepMind, expressed his belief that AI could attain cognitive abilities on par with those of humans within the next five years.

Acknowledging the remarkable advancements made in recent years, Hassabis stated, "I don't see any reason why that progress is going to slow down.

"I think it may even accelerate. So I think we could be just a few years, maybe within a decade away," indicating his expectations for further significant strides in the field.

If AI surpasses human general intelligence and attains superintelligence, it may become difficult or even impossible for humans to control, thereby posing a risk of human extinction.

Silver linings

It has to be recognised that AI also offers numerous advantages across various domains. One example is the automation of monotonous tasks, which increases efficiency and productivity.

AI enables businesses to operate at a faster pace, opens doors to new capabilities and business models, and enhances the overall customer experience.

It also aids in research and data analysis, contributing to improved decision-making processes and reducing the likelihood of human error. AI also plays a vital role in the medical field by driving advancements and assisting with repetitive tasks.

It can assume risks on behalf of humans, leading to safer environments. Moreover, AI has the potential to create a next-generation workplace that promotes seamless collaboration between enterprise systems and individuals. This, in turn, allows for the allocation of resources towards higher-level tasks, further enhancing productivity and innovation.

On the flip side, however, it must be recognised that the potential threats are very real.

The risks associated with intelligent and autonomous machines are diverse and can lead to unintended and catastrophic consequences. It is worth noting that experts hold differing opinions regarding the extent of the threat superintelligent AI poses to humanity.

Some anticipate that networked AI will enhance human effectiveness while simultaneously jeopardising human autonomy, agency and capabilities.

Understanding the possible drawbacks of AI and effectively managing its risks is crucial. Ultimately, the goal should be for AI to enhance human intelligence rather than replace it.
