Meta Launches AI Tools to Protect Against Online Image Abuse

Meta is testing new features designed to help protect young people from sextortion and intimate image abuse.

The AI tools aim to make it more difficult for potential scammers and cybercriminals to find and interact with teenagers. Meta is also testing new ways to help people spot scams and to encourage them to report those scams.

To help other technology companies disrupt this type of criminal activity across the internet, Meta has also started sharing more signals about malicious social media accounts through the Lantern platform, of which it is a founding member.

This news comes shortly after Meta made a string of announcements about its growing investment in AI infrastructure, including its latest AI chip, which is designed to improve the experience of its products and services.

Using AI to identify and block cybercriminals

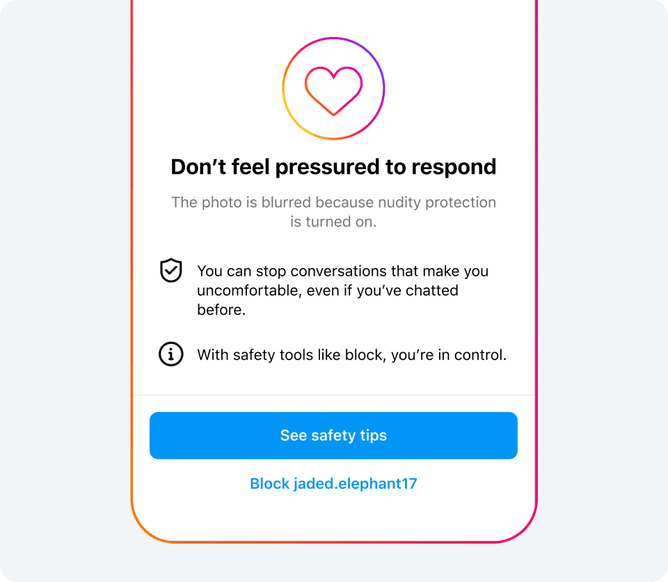

A key part of these new tools is a nudity filter in Instagram Direct Messages (DMs). Meta, which owns Facebook, Instagram and WhatsApp, has confirmed that it will begin testing the filter.

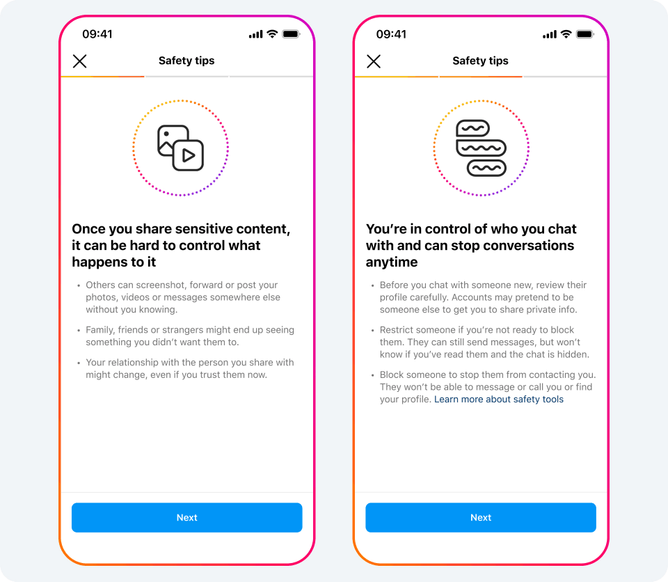

The filter will warn users when they receive an explicit image, and a pop-up message will warn them of the risks of sending such content to others online. It will be switched on by default for users under the age of 18, automatically blurring images that the AI detects as containing nudity.

The tool uses on-device machine learning to analyse whether an image contains nudity. Because the analysis happens on the user's device, the filter also works inside end-to-end encrypted chats, and Meta can see an image only if a user chooses to report it to the company.

Meta has also announced that it is testing new detection technology to identify accounts that may be engaging in sextortion scams. The company will restrict these accounts' ability to interact with anyone across the platform, with a particular focus on protecting younger users.

Meta may hide or even block suspicious accounts, and anyone who has engaged with such an account will be shown a pop-up message directing them to support.

“Financial sextortion is a horrific crime,” Meta says in its announcement. “We’ve spent years working closely with experts, including those experienced in fighting these crimes, to understand the tactics scammers use to find and extort victims online, so we can develop effective ways to help stop them.

“These updates build on our longstanding work to help protect young people from unwanted or potentially harmful contact.”

Building a responsible future for technology

Meta’s teams have long worked to investigate and disrupt criminal networks online by disabling their accounts and reporting them to law enforcement. Several such networks have been disrupted in the last year alone.

The company is now developing technology to help identify accounts that may be engaging in sextortion scams, based on a range of signals that could indicate criminal behaviour.

Meta has been working to remain at the forefront of responsible AI. In January 2024, the company announced that it had begun training Llama 3, the next generation of its generative AI (Gen AI) model, as part of its long-term goal of developing artificial general intelligence (AGI).

“Our long term vision is to build general intelligence, open source it responsibly and make it widely available so that everyone can benefit,” Mark Zuckerberg said at the time.

“Building the best AI assistants, AIs for creators, AIs for business and more - that needs advances in every area of AI, from reasoning to planning to coding to memory and other cognitive abilities.”

AI Magazine is a BizClik brand