Artificial Intelligence application bridges cyber skills gap

As technology becomes more advanced, cyber crime is a more prominent threat than ever to businesses and other professional organisations.

With the global cost of cyber crime expected to reach US$6trn (and US$10.5trn by the end of 2025), it is clear that cybersecurity is becoming a key focus for organisations all over the world.

Full report: ‘2021 Report: Cyber warfare in the C-Suite’

Security analysts can focus on more sophisticated attacks

As criminals become more sophisticated, AI is continuously developing to tackle threats at every level.

With more data available than ever, AI can take on many of the menial tasks within an organisation and find links between relatively minor cyber attacks much faster than a human analyst, without the risk of fatigue or human error.

Taking the pressure off cybersecurity personnel will allow many organisations to focus their staff on managing the larger, more significant attacks.

The skills gap

The self-development capabilities of AI are vital during the current skills shortage in the cybersecurity profession.

As demand for cybersecurity professionals rises rapidly, technology is moving faster than ever to help bridge the gap in preventing cyber crime.

A 2019 report by Burning Glass Technologies found that:

- The number of cybersecurity job postings has grown by 94% since 2013.

- Despite paying 16% more, cybersecurity jobs take 20% longer to fill than other IT jobs.

- More than half of jobs demanding cybersecurity skills are in fact other IT roles, where security is only part of a broader job description.

- For each cybersecurity opening, there was a pool of only 2.3 employed cybersecurity workers from which employers could recruit.

- The industry is increasingly turning to automation for solutions. Demand for automation skills in cybersecurity roles has risen 255% since 2013, and demand for risk management skills has risen 133%.

- Public cloud security and knowledge of the Internet of Things are projected to be the fastest-growing skills in cybersecurity over the next five years.

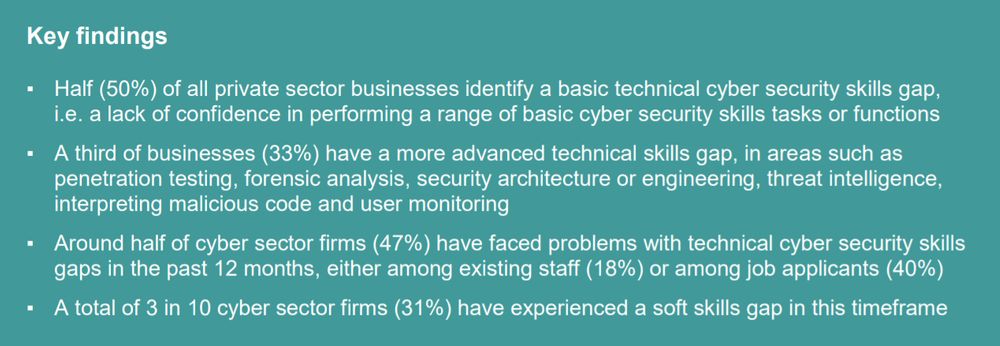

Confidence in the workforce

A recent government report, ‘Cyber security skills in the UK labour market 2021: findings report’, examines the current state of cybersecurity recruitment in the UK.

Data collated in the report shows how confident personnel are in various cybersecurity tasks, and the report states that these results are consistent with its 2018 and 2020 findings.

“The areas where skill gaps are most prevalent are in setting up configured firewalls, storing or transferring personal data and detecting and removing malware, which is consistent with the 2018 and 2020 results. Nevertheless, only a minority of cyber leads across the business population say they are not confident in carrying out each of these tasks”. - Ipsos MORI - Cyber security skills in the UK labour market 2021: findings report