LeddarTech and Seoul Robotics partner on Lidar AI vision

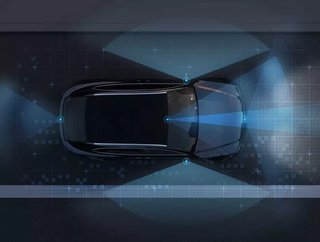

LeddarTech, a provider of environmental sensors for use in autonomous vehicles, has announced a partnership with AI computer vision firm Seoul Robotics.

Quebec City-based LeddarTech will work with Seoul Robotics, which is headquartered in the South Korean capital, to combine the two companies' hardware and software offerings, using Seoul Robotics' lidar data processing platform.

Sensing with light

Lidar technology was famously spurned by Tesla in its pursuit of driverless cars, but it is nevertheless used by most of its competitors. The optical equivalent of radar, lidar measures distances by shining a laser at an object and sensing the reflected light, typically by timing how long the light takes to return. In use since the 1960s, the technology found applications in geospatial work such as surveying before being adopted for autonomous vehicles.
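For readers curious about the arithmetic behind "sensing the reflection", the sketch below assumes a simple pulsed time-of-flight design, where distance follows from the round-trip time of a laser pulse: d = c · t / 2. It is a generic illustration, not a description of how the Leddar Pixell or any specific sensor computes range; the function name `tof_distance_m` is hypothetical.

```python
# Speed of light in a vacuum, in metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Hypothetical helper: distance to a target from a pulse's round-trip time.

    Assumes a pulsed time-of-flight measurement in air, approximated as vacuum.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The light travels out to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    # A reflection arriving 200 nanoseconds after the pulse left.
    print(f"{tof_distance_m(200e-9):.2f} m")  # prints "29.98 m"
```

The example also shows why lidar timing electronics must be fast: a single nanosecond of round-trip time corresponds to only about 15 cm of range.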

“By partnering with Seoul Robotics, a respected leader in 3D perception software for LiDAR sensors, LeddarTech and our customers benefit from Seoul Robotics’ advanced and innovative LiDAR-based perception technology,” said Charles Boulanger, CEO of LeddarTech.

“The collaboration yields a solution to greatly ease and accelerate the integration of the Leddar Pixell in a wide range of applications in mobility and industrial markets.”

The Leddar Pixell

LeddarTech's implementation of the technology, the Leddar Pixell, is a sensor with a 180-degree field of view that detects objects and other road users. It is intended for use in everything from robotaxis to commercial vehicles.
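To make "a 180-degree field of view" concrete, the sketch below converts range readings spread evenly across a horizontal fan into 2D (x, y) points, with x pointing straight ahead. The channel count, even spacing and output format are assumptions for illustration only and do not reflect the Pixell's actual channel layout or data interface; `returns_to_points` is a hypothetical name.

```python
import math


def returns_to_points(ranges_m, fov_deg=180.0):
    """Hypothetical helper: map evenly spaced range returns to (x, y) points.

    Assumes channel 0 points at -fov/2, the last channel at +fov/2,
    x is straight ahead and y is to the left of the sensor.
    """
    n = len(ranges_m)
    if n < 2:
        raise ValueError("need at least two channels")
    step_deg = fov_deg / (n - 1)  # angular spacing between adjacent channels
    points = []
    for i, r in enumerate(ranges_m):
        azimuth = math.radians(-fov_deg / 2 + i * step_deg)
        # Polar-to-Cartesian conversion for this channel's return.
        points.append((r * math.cos(azimuth), r * math.sin(azimuth)))
    return points


if __name__ == "__main__":
    # Three channels, each seeing an object 10 m away: right, ahead, left.
    for x, y in returns_to_points([10.0, 10.0, 10.0]):
        print(f"x={x:6.2f} m, y={y:6.2f} m")
```

A real sensor adds vertical channels, missing returns and calibration offsets, but the same polar-to-Cartesian step is the starting point for placing detections around a vehicle.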

“Robust LiDAR sensors like the Leddar Pixell require the most advanced 3D perception software to process and interpret data in real time,” said HanBin Lee, co-founder and CEO of Seoul Robotics. “Our industry-leading perception platform, SENSR, enables stronger understanding and interpretation of 3D data, better serving the market with highly detailed, LiDAR-based perception. Through our collaboration in the Leddar Ecosystem, we will provide object detection and classification, as well as speed measurement, direction, and location information without a need for map data, all while reducing cost and increasing efficiency.”
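Seoul Robotics does not say how SENSR derives speed and direction. As a rough illustration only, the sketch below estimates both from two successive positions of a tracked object's centroid using a simple finite difference. The `Observation` type and `speed_and_heading` function are hypothetical, and a production tracker would typically smooth over many frames rather than rely on two.

```python
import math
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical record: an object's centroid at a point in time."""
    t_s: float  # timestamp in seconds
    x_m: float  # metres, sensor frame
    y_m: float  # metres, sensor frame


def speed_and_heading(prev: Observation, curr: Observation):
    """Estimate speed (m/s) and heading (degrees, counterclockwise from +x)."""
    dt = curr.t_s - prev.t_s
    if dt <= 0:
        raise ValueError("observations must be in increasing time order")
    dx = curr.x_m - prev.x_m
    dy = curr.y_m - prev.y_m
    speed = math.hypot(dx, dy) / dt
    # atan2 returns (-180, 180]; wrap into [0, 360) for a compass-style value.
    heading = math.degrees(math.atan2(dy, dx)) % 360.0
    return speed, heading


if __name__ == "__main__":
    s, h = speed_and_heading(Observation(0.0, 0.0, 0.0), Observation(1.0, 3.0, 4.0))
    print(f"{s:.1f} m/s, heading {h:.1f} deg")  # prints "5.0 m/s, heading 53.1 deg"
```

Because the positions come straight from the sensor's own 3D measurements, this kind of estimate needs no map data, which matches the capability described in the quote.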