Facebook updates AI Habitat simulator to improve interactivity

Facebook's AI research department has announced Habitat 2.0, a next-generation simulation platform that lets AI researchers teach machines to navigate photo-realistic 3D virtual environments and interact with objects just as they would in an actual kitchen, dining room, or other commonly used space.

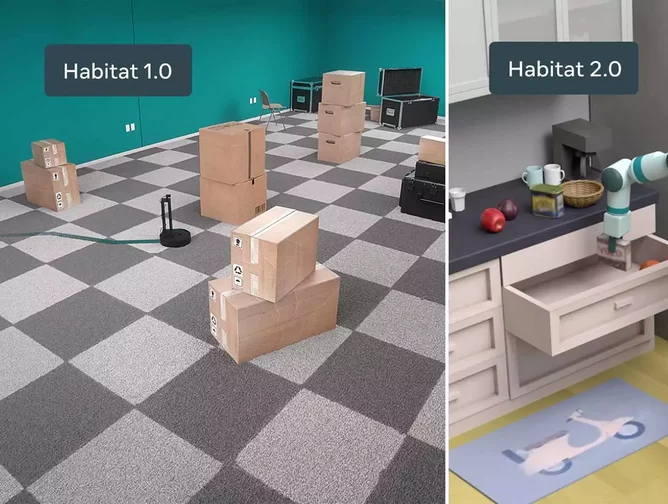

The open-source Habitat simulator was first launched in 2019, giving AI researchers a better way to teach industrial robots to interact safely and efficiently with the environments in which they’re designed to operate. Facebook’s AI team said at the time that it built the simulator because training in simulation is far easier and more efficient than building physical environments in the real world to train robots.

Habitat 2.0 builds on the original open-source release of AI Habitat with faster simulation speeds and added interactivity, so AI agents can quickly perform the equivalent of many years of real-world actions, such as picking up items, opening and closing drawers and doors, and much more.

“We believe Habitat 2.0 is the fastest publicly available simulator of its kind available to AI researchers,” the researchers wrote in a blog post.

Habitat 2.0 also includes a new, fully interactive 3D dataset of indoor spaces and a new set of benchmarks, the Home Assistant Benchmark (HAB), for training virtual robots in these complex, physics-enabled scenarios. With this new dataset and platform, AI researchers can go beyond building virtual agents in static 3D environments and move closer to creating robots that can easily and reliably perform useful tasks like stocking the fridge, loading the dishwasher, or fetching objects on command and returning them to their usual place.

Habitat-Matterport dataset

Alongside Habitat 2.0, Facebook is releasing a dataset of 3D indoor scans co-created with Matterport. The Habitat-Matterport 3D Research Dataset (HM3D) is a collection of 1,000 Habitat-compatible scans made up of “accurately scaled” residential spaces such as apartments, multifamily housing, and single-family homes, as well as commercial spaces including office buildings and retail stores.

“Until now, this rich spatial data has been glaringly absent in the field, so HM3D has the potential to change the landscape of embodied AI and 3D computer vision,” said Dhruv Batra, Research Scientist at Facebook AI Research. “Our hope is that the 3D dataset brings researchers closer to building intelligent machines, to do for embodied AI what pioneers before us did for 2D computer vision and other areas of AI.”

“We are excited to collaborate with Facebook as we provide the academic and research communities access to this unique spatial dataset that is sure to impact how we work and live,” said Conway Chen, Vice President of Business Development and Alliances at Matterport. “With more than five million spaces captured with the Matterport platform, we are the only company that can offer a diverse library of high-resolution, data-rich digital twins of various styles, sizes, and complexities from across the world. HM3D can also be used more broadly by academia, and we can’t wait to see what innovations emerge.”

What’s next?

In the future, Habitat will seek to model living spaces in more places around the world, enabling more varied training that accounts for culturally and regionally specific furniture layouts, types of furniture, and types of objects.

The researchers acknowledge that long-horizon tasks remain difficult: “Our experiments suggest that complex, multi-step tasks such as setting the table or taking out the trash are significantly challenging. Although we were able to train individual skills (pick, place, navigate, open drawer, etc.) with large-scale model-free reinforcement learning to reasonable degrees of success, training a single agent that is able to accomplish all such skills and chain them without cascading errors remains an open challenge. We believe that HAB presents a research agenda for interactive embodied AI for years to come.”