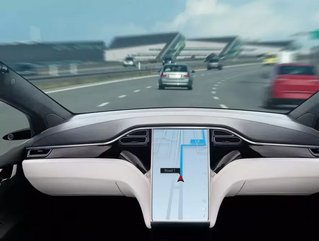

UK plans to allow self-driving vehicles on roads

The UK’s Department for Transport has announced that self-driving vehicles could be on British roads by the end of the year.

The terminology has caused some controversy: initially, only Automated Lane Keeping Systems (ALKS) will be allowed, at speeds of up to 37 mph on motorways (essentially in slow-moving traffic). Such systems only reach level 2 of the Society of Automotive Engineers’ (SAE) Levels of Driving Automation standard, with the targeted level 5 representing complete autonomy at all times. The fear is that referring to such systems as self-driving could lead to complacency on the part of drivers.

The safety of autonomous driving

The perils of autonomous driving recently made headlines for Tesla, after a crash in Texas killed two men. The company’s Autopilot feature was initially blamed, though Tesla denies that it was enabled.

Transport Minister Rachel Maclean said: “This is a major step for the safe use of self-driving vehicles in the UK, making future journeys greener, easier and more reliable while also helping the nation to build back better. But we must ensure that this exciting new tech is deployed safely, which is why we are consulting on what the rules to enable this should look like. In doing so, we can improve transport for all, securing the UK’s place as a global science superpower.”

The government said that self-driving vehicles could boost road safety by reducing human error, which it said was a factor in more than 85% of accidents. It also anticipated that the technology could end urban congestion through smart coordination between traffic lights and autonomous vehicles, and could lead to improved public transport.

“Higher levels of automation in the future”

Society of Motor Manufacturers and Traders Chief Executive Mike Hawes said: “Technologies such as Automated Lane Keeping Systems will pave the way for higher levels of automation in future – and these advances will unleash Britain’s potential to be a world leader in the development and use of these technologies, creating essential jobs while ensuring our roads remain among the safest on the planet.”