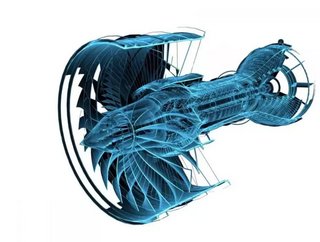

How Physna uses deep learning to search with 3D objects

Cincinnati, Ohio-based Physna uses deep learning to make three-dimensional shapes searchable.

Search engines were the key drivers of the web’s popularity, connecting websites and allowing users to find what they were looking for. Those search engines initially relied on text, and in more recent years have also allowed queries via 2D images - so-called reverse image searches.

Searching with objects

Despite the recent proliferation of 3D files - whether models for 3D printing and CAD software or photogrammetric scans - it has not been possible to search by geometry. That is where Physna comes in.

"Physna has enabled a quantum leap in technology by allowing software to truly understand physical 3D data. By merging the physical with the digital, we have unlocked massive and ever-growing opportunities in everything from geometric search to 3D machine learning and predictions," said Paul Powers, CEO and founder of Physna.

Using deep learning, Physna’s software turns 3D models into computer-understandable data, which then lets engineers and designers find models similar to the parts they need. Its customers include the likes of the Department of Defense, while the company also runs a consumer-facing website powered by the same technology.
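Physna has not detailed its method here, so the following is only a rough sketch of the general idea of searching by geometry: reduce each shape to a numeric descriptor, then rank catalog shapes by how close their descriptors are to the query's. It assumes a classical hand-built descriptor (a histogram of distances between random point pairs, sometimes called a D2 shape distribution) in place of deep learning, and every name in it (`shape_descriptor`, `search`, the toy catalog) is hypothetical rather than part of any Physna product.

```python
import math
import random


def shape_descriptor(points, bins=16, pairs=2000, seed=0):
    """Summarise a point cloud as a histogram of pairwise distances.

    Distances are divided by their mean, so the descriptor ignores overall size.
    This is a hand-built stand-in, not a learned (deep-learning) embedding.
    """
    rng = random.Random(seed)
    dists = [math.dist(*rng.sample(points, 2)) for _ in range(pairs)]
    mean = sum(dists) / len(dists)
    hist = [0.0] * bins
    for d in dists:
        # Map distances in [0, 3 * mean) onto the bins; clamp anything longer.
        idx = min(int(d / (3.0 * mean) * bins), bins - 1)
        hist[idx] += 1.0 / pairs
    return hist


def descriptor_gap(u, v):
    """L1 distance between two descriptors; smaller means more alike."""
    return sum(abs(a - b) for a, b in zip(u, v))


def search(query_points, catalog, top_k=3):
    """Rank catalog entries by descriptor similarity to the query shape."""
    q = shape_descriptor(query_points)
    ranked = sorted((descriptor_gap(q, shape_descriptor(pts)), name)
                    for name, pts in catalog.items())
    return ranked[:top_k]


def sphere_points(n, radius, rng):
    """Toy data: n points spread uniformly over a sphere's surface."""
    pts = []
    for _ in range(n):
        x, y, z = (rng.gauss(0, 1) for _ in range(3))
        norm = math.sqrt(x * x + y * y + z * z)
        pts.append((radius * x / norm, radius * y / norm, radius * z / norm))
    return pts


def box_points(n, sx, sy, sz, rng):
    """Toy data: n points spread uniformly through a solid box."""
    return [(rng.uniform(0, sx), rng.uniform(0, sy), rng.uniform(0, sz))
            for _ in range(n)]


# Hypothetical catalog of made-up shapes, standing in for real 3D files.
rng = random.Random(42)
catalog = {
    "large sphere": sphere_points(500, 5.0, rng),
    "cube": box_points(500, 1.0, 1.0, 1.0, rng),
    "long rod": box_points(500, 10.0, 1.0, 1.0, rng),
}
query = sphere_points(500, 1.0, rng)  # a small sphere as the search query
results = search(query, catalog)
for gap, name in results:
    print(f"{name}: {gap:.3f}")
```

Because the descriptor is scaled by the mean distance, the small query sphere should match the large sphere in the catalog ahead of the cube and the rod. A production system would work on real meshes and far richer features, which is where learned representations come in.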

The future of search

Since its founding in 2016, the company has raised $29mn across three funding rounds, $20mn of it in its latest Series B. That recently announced round was led by Sequoia Capital, with participation from Drive Capital.

"Paul and the Physna team have developed a breakthrough platform that enables intuitive search of 3D models for the first time," said Shaun Maguire, partner at Sequoia. "With the amount of 3D data in the world about to explode, Physna will be the way this data is organized and accessed—ultimately, becoming the GitHub for 3D models."

"Having both Sequoia and Drive endorse this next-generation of search and machine learning helps Physna empower even more technical innovations for our customers and the market as a whole," added Powers.

(Image: Physna)